DX12 Renderer

A self-directed project built across two semesters outside of regular project hours, covering the full pipeline from framework setup to model loading, texturing, mipmapping and Blinn-Phong lighting with normal mapping.

What did I do?

- Implemented a DirectX 12 rendering pipeline from scratch, including command lists, swap chain management, descriptor heaps, and CPU–GPU synchronization

- Added model loading with Assimp, texture loading with mipmapping, Blinn-Phong lighting, and normal mapping

- Learned how to use Microsoft's DirectX 12 graphics API, and integrated AMD's D3D12MemoryAllocator

Block B – Building the Framework

The first half of the project (starting in November ’25) was spent purely on getting a working foundation in place. Coming from OpenGL in Year 1 and compute shaders in Godot, I found DirectX 12’s explicit architecture a significant jump. I followed Jeremiah van Oosten’s 3dgep tutorials as the primary resource, supplemented by Microsoft’s DX12 samples for filling in gaps the tutorials skipped.

The first visible result was a clear color, then a triangle using Microsoft’s HelloTriangle sample as reference, and eventually a rotating 3D cube using the 3dgep Part 2 tutorial. At that point the entire renderer lived in a single Device class held together by quick hacks, which made it clear that a proper framework had to come before any further features.

The remainder of the block was spent building the supporting infrastructure. This meant a ResourceStateTracker for tracking resource state transitions between states like RENDER_TARGET, PRESENT and COPY_DEST, an UploadBuffer for staging data, and a full descriptor allocation system (DescriptorAllocator -> DescriptorAllocatorPage -> DynamicDescriptorHeap) for handling CPU and GPU descriptor handles across frames. CPU-GPU synchronization was handled through a CommandQueue wrapper that manages fences and frame pacing for the triple-buffered swap chain.
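The frame pacing idea can be sketched without any D3D12 types. The block below is a simplified simulation, not the renderer's actual CommandQueue wrapper: `FakeGpuFence` and `FramePacer` are hypothetical names, and the fake fence stands in for what `ID3D12CommandQueue::Signal` and `ID3D12Fence::GetCompletedValue` would provide. Each of the three frames remembers the fence value signaled after its last submission, and the CPU only reuses a frame's resources once the GPU has passed that value.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical stand-in for the GPU side of an ID3D12Fence.
struct FakeGpuFence {
    std::uint64_t completedValue = 0;  // what GetCompletedValue() would report
    std::uint64_t lastSignaled = 0;    // highest value queued via Signal()

    // Pretend the GPU finished its oldest outstanding submission.
    void CompleteOneSubmission() {
        if (completedValue < lastSignaled) ++completedValue;
    }
};

class FramePacer {
public:
    static constexpr std::size_t kFrameCount = 3;  // triple buffering

    explicit FramePacer(FakeGpuFence& fence) : fence_(fence) {}

    // Call before recording into the current frame's resources.
    // Returns how many submissions the CPU had to wait for.
    int BeginFrame() {
        int waits = 0;
        while (fence_.completedValue < frameFenceValues_[frameIndex_]) {
            // Real code would block here (SetEventOnCompletion plus
            // WaitForSingleObject); the simulation just advances the GPU.
            fence_.CompleteOneSubmission();
            ++waits;
        }
        return waits;
    }

    // Call after submitting the frame's command lists.
    std::uint64_t EndFrame() {
        const std::uint64_t value = ++nextFenceValue_;
        assert(value > fence_.lastSignaled);  // fence values must only increase
        fence_.lastSignaled = value;
        frameFenceValues_[frameIndex_] = value;
        frameIndex_ = (frameIndex_ + 1) % kFrameCount;
        return value;
    }

private:
    FakeGpuFence& fence_;
    std::array<std::uint64_t, kFrameCount> frameFenceValues_{};  // all zero
    std::uint64_t nextFenceValue_ = 0;
    std::size_t frameIndex_ = 0;
};
```

With a GPU that never makes progress on its own, the first three `BeginFrame` calls return immediately and the fourth has to wait for exactly one submission, which is the "CPU may run at most three frames ahead" behaviour that triple buffering is meant to give.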

Replacing OpenGL’s mutable state model with DX12’s immutable Pipeline State Objects was one of the more conceptually interesting parts of this block: all rasterizer, blend, depth-stencil, shader and input layout state has to be compiled upfront into a monolithic PSO rather than set piece by piece at draw time.

By the end of the block the cube was rendering again, this time properly backed by the framework. No textures or lighting yet, but the groundwork was solid. The cube from the end of the block is visible in the GIF above.

Block C – Features

With the framework in place, the second half of the project (starting in February ’26) was spent implementing actual rendering features, most of which happened in a concentrated push during spring break.

Model loading came first, as seen in the video above, using the Assimp library. Texture loading followed, referencing both the 3dgep Part 4 tutorial and LearnOpenGL’s texture and model loading chapters. After I struggled to get the “DamagedHelmet” glTF displaying correctly, my friend Niels walked me through RenderDoc during a Discord call, which revealed that the vertices were not being transformed into the right coordinate space. RenderDoc became a regular part of my workflow from that point on.
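For the mipmapping part of texture loading, the size of a full mip chain follows directly from the texture dimensions. The helpers below are a minimal sketch with hypothetical names (`MipLevelCount`, `MipDimension`), not code from the renderer: the larger dimension is halved until it reaches 1, and each level's size is clamped so it never drops to 0.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Number of levels in a full mip chain, including the base level.
// Equivalent to floor(log2(max(width, height))) + 1.
inline std::uint32_t MipLevelCount(std::uint32_t width, std::uint32_t height) {
    assert(width > 0 && height > 0);
    std::uint32_t largest = std::max(width, height);
    std::uint32_t levels = 1;
    while (largest > 1) {
        largest >>= 1;  // each level halves the dimension, rounding down
        ++levels;
    }
    return levels;
}

// Size of one dimension at a given mip level, clamped to at least 1 texel.
inline std::uint32_t MipDimension(std::uint32_t baseSize, std::uint32_t level) {
    assert(level < 32);  // shifting a 32-bit value by 32 or more is undefined
    return std::max<std::uint32_t>(1u, baseSize >> level);
}
```

A 2048x2048 texture therefore has 12 levels, and a non-square 256x64 texture has 9, with the shorter side staying at 1 texel for the last few levels.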

Resource management became considerably simpler after a friend from Year 2 suggested AMD’s D3D12MemoryAllocator library, which is similar to VulkanMemoryAllocator. Refactoring to use it also surfaced a memory leak caused by a texture cache stored in the global namespace, which I was thankfully able to track down and fix. I also switched from root constants to CBVs and StructuredBuffers for uploading per-frame and per-object data, which made the renderer considerably more flexible.
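One practical difference between the two buffer types is how data is laid out in memory. D3D12 requires constant buffer views to be placed and sized in multiples of 256 bytes, while StructuredBuffer elements are simply packed at their stride. The sketch below illustrates the offset arithmetic only; the struct layouts and function names are assumptions made for the example, not the renderer's actual data.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Mirrors D3D12_CONSTANT_BUFFER_DATA_PLACEMENT_ALIGNMENT.
constexpr std::size_t kCbvAlignment = 256;

// Rounds size up to the next multiple of a power-of-two alignment.
inline std::size_t AlignUp(std::size_t size, std::size_t alignment) {
    assert(alignment != 0 && (alignment & (alignment - 1)) == 0);
    return (size + alignment - 1) & ~(alignment - 1);
}

// Hypothetical per-frame data, bound through a CBV.
struct PerFrameConstants {
    float viewProjection[16];
    float cameraPosition[4];
    std::uint32_t lightCount;
    std::uint32_t padding[3];  // keeps the size a multiple of 16 bytes
};

// Hypothetical per-object data, one element of a StructuredBuffer.
struct PerObjectData {
    float world[16];
    float normalMatrix[16];
};

static_assert(sizeof(PerFrameConstants) == 96, "unexpected struct padding");
static_assert(sizeof(PerObjectData) == 128, "unexpected struct padding");

// Byte offset of a frame's constants when all frames share one buffer:
// every slot is padded up to the 256-byte CBV granularity.
inline std::size_t PerFrameCbvOffset(std::size_t frameIndex) {
    return frameIndex * AlignUp(sizeof(PerFrameConstants), kCbvAlignment);
}

// Byte offset of an object's element: tightly packed at the struct stride.
inline std::size_t PerObjectOffset(std::size_t objectIndex) {
    return objectIndex * sizeof(PerObjectData);
}
```

Here the 96-byte per-frame struct occupies a full 256-byte slot, so packing many small per-object records into a StructuredBuffer wastes far less memory than giving each object its own CBV.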

Showcased in the video above, Blinn-Phong lighting with point and directional lights was implemented next, referencing LearnOpenGL’s lighting chapters and adapting the equations for HLSL. This was the first time the lighting math properly clicked for me: understanding why Blinn-Phong is an approximation of PBR specular and why the Phong model itself is largely a hack.
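The core of the model fits in a few lines. The sketch below is written in C++ rather than HLSL so it can be run on the CPU; `Vec3`, `LightTerms` and `BlinnPhong` are hypothetical names for this example, not the renderer's shader code. It returns the diffuse factor (N·L) and the specular factor ((N·H)^shininess, with H the half vector between the light and view directions) for a single light, leaving colors and attenuation to the caller.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator*(Vec3 v, float s) { return {v.x * s, v.y * s, v.z * s}; }
inline float Dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Returns the zero vector for degenerate input instead of dividing by zero.
inline Vec3 SafeNormalize(Vec3 v) {
    const float len = std::sqrt(Dot(v, v));
    return len > 0.0f ? v * (1.0f / len) : Vec3{0.0f, 0.0f, 0.0f};
}

struct LightTerms {
    float diffuse;   // Lambert term, N.L clamped to [0, 1]
    float specular;  // Blinn-Phong term, (N.H)^shininess
};

// toLight and toView point away from the surface; they need not be unit length.
inline LightTerms BlinnPhong(Vec3 normal, Vec3 toLight, Vec3 toView, float shininess) {
    assert(shininess > 0.0f);
    const Vec3 n = SafeNormalize(normal);
    const Vec3 l = SafeNormalize(toLight);
    const Vec3 v = SafeNormalize(toView);

    const float nDotL = std::max(Dot(n, l), 0.0f);
    if (nDotL <= 0.0f) return {0.0f, 0.0f};  // light is behind the surface

    // Half vector between light and view directions. Phong would instead
    // reflect the light direction about the normal and compare it with v.
    const Vec3 h = SafeNormalize(l + v);
    const float nDotH = std::max(Dot(n, h), 0.0f);
    return {nDotL, std::pow(nDotH, shininess)};
}
```

For a directional light `toLight` is the same for every pixel, while for a point light it is the light position minus the surface position, usually combined with a distance attenuation factor.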

Normal mapping followed (also shown in the video above), using a TBN matrix built from the pre-calculated tangents and bitangents that Assimp provides. Emissive mapping was straightforward on top of that.
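What the TBN matrix does can be shown with plain vector math. The following is a CPU-side sketch with hypothetical helper names (`DecodeNormal`, `TangentToWorld`), assuming the tangent, bitangent and normal are already in world space: the sampled texel is remapped from [0, 1] to [-1, 1], then used as weights for the three basis vectors.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator*(Vec3 v, float s) { return {v.x * s, v.y * s, v.z * s}; }

inline Vec3 Normalize(Vec3 v) {
    const float len = std::sqrt(v.x * v.x + v.y * v.y + v.z * v.z);
    assert(len > 0.0f);
    return v * (1.0f / len);
}

// Normal maps store each component in [0, 1]; remap to [-1, 1].
inline Vec3 DecodeNormal(Vec3 texel) {
    return {texel.x * 2.0f - 1.0f, texel.y * 2.0f - 1.0f, texel.z * 2.0f - 1.0f};
}

// Multiplying by a matrix whose columns are T, B and N is the same as
// summing the basis vectors weighted by the tangent-space components.
inline Vec3 TangentToWorld(Vec3 tangent, Vec3 bitangent, Vec3 normal,
                           Vec3 tangentSpaceNormal) {
    const Vec3 world = tangent * tangentSpaceNormal.x
                     + bitangent * tangentSpaceNormal.y
                     + normal * tangentSpaceNormal.z;
    return Normalize(world);
}
```

The typical "flat" normal map color (0.5, 0.5, 1.0) decodes to (0, 0, 1) and therefore reproduces the unperturbed surface normal. In HLSL the same operation is usually a single `mul`, where the argument order depends on whether the matrix is built from rows or columns.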

A resource that helped me understand these concepts better was a series of lectures by Freya Holmér, which can be found at this link.

Notable features implemented:

- Triple-buffered swap chain with fence-based CPU-GPU synchronization

- Descriptor heap management with per-frame allocation

- Model loading via Assimp, texture loading with mipmapping via DirectXTex

- D3D12MemoryAllocator integration for GPU memory allocation

- CBV and StructuredBuffer support for per-frame and per-object data

- Blinn-Phong lighting with point and directional lights

- Normal mapping and emissive mapping

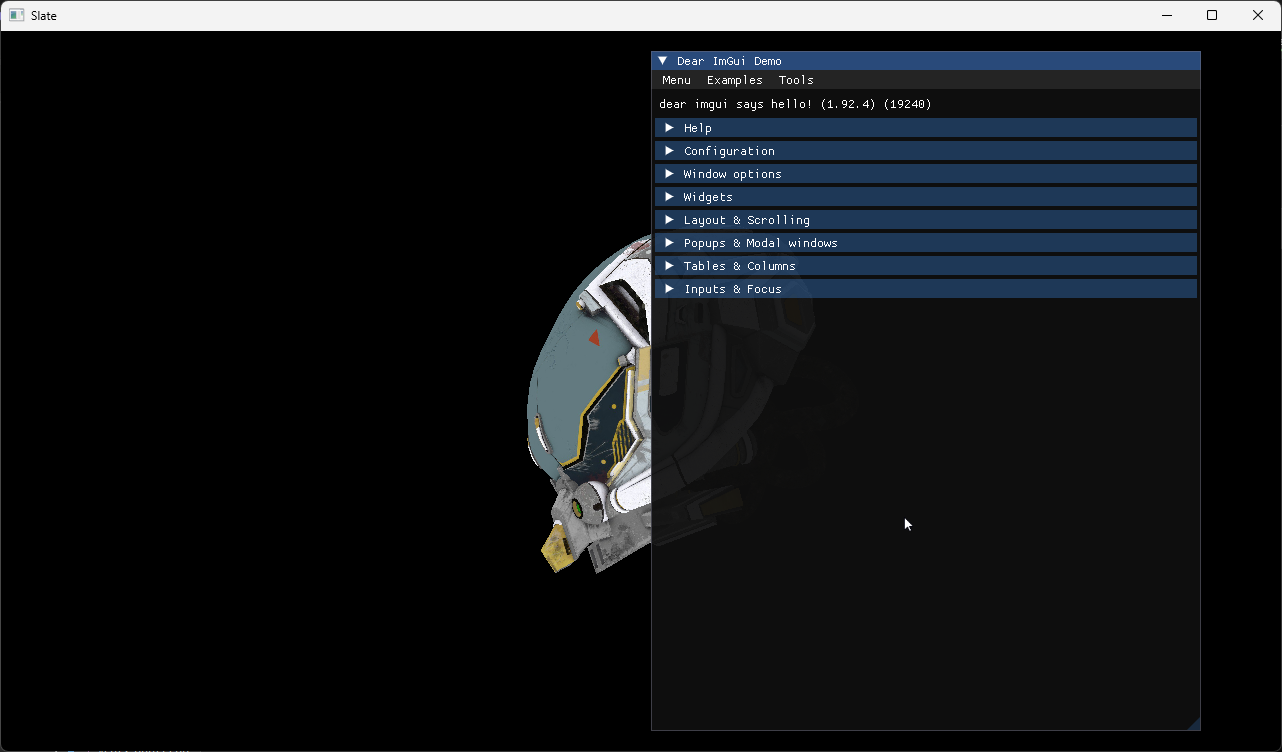

- ImGui and ImGuizmo integration for runtime tweaking

Project Outcome

The result of this project can be seen in higher quality in the video below: